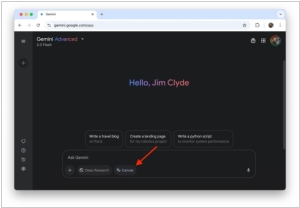

Google Gemini

Google's multimodal generative neural network. It can answer questions and generate text, code, images, and videos.

News about Google Gemini

19.11.25. Google launched Gemini 3 with record-breaking benchmarks

Google has released Gemini 3, a new version of its LLM, which is now available through the mobile app and the search engine. According to its creators, it is a contender for the title of the most powerful AI model on the market today, despite the release of OpenAI's GPT-5.1 a week earlier and Anthropic's Sonnet 4.5 two months earlier. Google particularly advertises the model's reasoning ability, which it says is demonstrated in independent benchmarks. With a score of 37.4, the model achieved the highest result on Humanity's Last Exam, a benchmark designed to assess general skills and knowledge. The previous record, set by GPT-5 Pro, was 31.64. Gemini 3 also topped the LMArena rankings, a benchmark developed with human input that measures user satisfaction. In addition to the basic model, Google also released the Google Antigravity system, which allows the creation of AI coding agents compatible with IDEs such as Warp or Cursor 2.0.

2025. Google Adds Canvas to Gemini

Gemini has introduced Canvas, an interactive workspace designed to make writing and programming more comfortable and efficient. Competitors ChatGPT and Claude introduced a similar feature six months ago. Using Canvas, you can create drafts of texts and quickly edit them with Gemini's feedback to improve clarity, tone, length, or formatting. This feature is especially useful for content creators who need to refine their texts without switching between multiple tools. For developers, Canvas is an equally powerful tool: it can generate, debug, and explain code, making it easier to iterate on your projects.

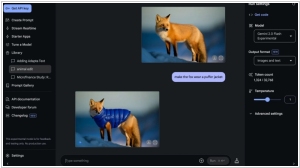

2025. Google's Gemini 2.0 Flash now allows image editing

Google's Gemini 2.0 Flash model (which runs on Google AI Studio) now enables image editing using natural language. Unlike earlier multimodal systems that combined separate models (for example, a language model alongside Imagen 3 to generate images), Gemini 2.0 Flash is natively multimodal: it generates images in the same system that processes text. This eliminates the need for cross-model communication, significantly reducing latency. Because Gemini 2.0 Flash no longer relies on Imagen 3, it offers faster response times and a smoother experience. You can even add long text directly to images!

2024. Gemini 2.0 can generate images and audio and execute code

Google has unveiled a new version of its AI model, Gemini 2.0 Flash, which can generate not only text but also images and audio. Furthermore, the model can work with applications and services such as Google Search, execute code, and much more. According to Google, Gemini 2.0 Flash is twice as fast as the previous version, Gemini 1.5 Pro, and significantly improves performance in tasks related to programming and image analysis. Along with the announcement of Gemini 2.0 Flash, the company also introduced the Deep Research feature, which allows the AI to scan web pages and generate analytical reports based on an initial query. Compared to the first version of Gemini, the new model has improved reasoning abilities, understands more complex instructions, supports longer contexts, and has become more "agentic", that is, capable of performing multi-step tasks independently upon user request.

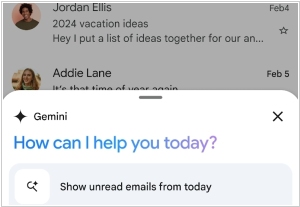

2024. Gmail users on Android can now chat with Gemini about their emails

Google introduced a new feature called Gmail Q&A, enabling Gmail users on Android devices to chat directly with Gemini about their emails. For instance, you can ask Gemini to summarize emails with phrases like, “Update me on the emails about quarterly planning.” You can also use the feature to search for particular details, such as asking Gemini, “What was the company’s expenditure on the last marketing event?” Gmail Q&A is being rolled out to users who pay $20 a month for Gemini. It’s improbable that Gmail Q&A will be available to free Gmail users in the near future. Instead, Google is promoting features like Gmail Q&A to persuade users that the steep monthly subscription fees for Gemini are justified. The company is also integrating Gemini into all its existing products, including Google Docs, Google Calendar and more, though it all comes at a cost.

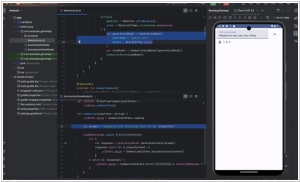

2024. Google rolls out Gemini in Android Studio for coding assistance

In a move that might make even the most cynical developer raise an eyebrow (and then quickly lower it again), Google is steadily weaving its Gemini magic into all manner of things, this time gracing Android Studio with the newly christened "Gemini Pro." This rather clever little bot has taken up residence in the IDE like an enthusiastic intern, fielding coding questions with an alarming eagerness to assist. Google, ever the charmer, insists that Gemini Pro is now even better at code completions, debugging, resource-hunting and writing the kind of documentation that might, for once, not induce mild panic. Privacy, of course, remains sacrosanct (or at least as sacrosanct as things get in this day and age), requiring developers to log in and specifically say, "Yes, I want the bot." And if that weren't enough, Android Studio thoughtfully hands developers a ready-to-go Gemini API starter template, as if to say, "Go on, then: get cracking with this generative AI wizardry in your apps!"

2024. Google Bard renamed to Gemini. Google Assistant switched to Gemini engine

Demis Hassabis's DeepMind team has won a victory within Google. The Gemini model will completely replace the Bard model, developed in-house. The Bard chatbot has already been renamed to Gemini, and the Google Assistant has switched to the Gemini engine (though currently only in English). Google claims that Gemini matches and even surpasses OpenAI's GPT-4 neural network in many respects. The chatbot currently uses Gemini Pro, the mid-range model in the Gemini series. Gemini is said to process information like the human brain and outperform all existing neural networks in any domain. Language in this AI model is just one of the information formats, along with code, images, audio, and video.

2023. Google Bard can now watch YouTube videos for you

With the latest update, Google Bard now possesses the almost bewildering ability to grasp the inner workings of YouTube videos, leaving users in a position to fire off questions about specific content without even having to endure the tiresome burden of pressing play. One can now dive into the murky depths of intricate follow-up questions, demanding summaries and nitpicky details from Bard's AI brain, which presumably has more time on its circuits than we mere mortals. Of course, this thrilling leap forward has also sent shivers through online communities, where some are already fretting that video educators could soon find themselves out of a job, victims of this overly clever chatbot. To add to the delightful sense of impending doom, the update has also rekindled the usual anxieties about privacy and content ownership, as Bard stretches its virtual fingers towards ever more tasks, making some wonder if there's anything it can’t do.

2023. Google Assistant is getting AI capabilities of Bard

Imagine, if you will, that the Google Assistant has somehow evolved into an entity of mildly alarming brilliance, now backed by a rather cerebral companion named Bard, gifted in all things generative AI. The two, working together like a digital odd couple, can now tackle everything from peculiar inquiries to the deeply philosophical and mundane. Fancy a rummage through your Gmail for unread missives of the past week? Fear not! This dashing duo can do just that—provided you’ve given them permission to rummage, of course. But they won’t stop there; no, they’re up for all manner of personal pursuits: planning epic voyages, crafting grocery lists and even composing delightful little snippets for your social media feed. This, however, is a mere flirtation with the future, as Google intends to gently observe how users engage with this digital symphony of assistance before making it a universal upgrade for Android and iOS alike.